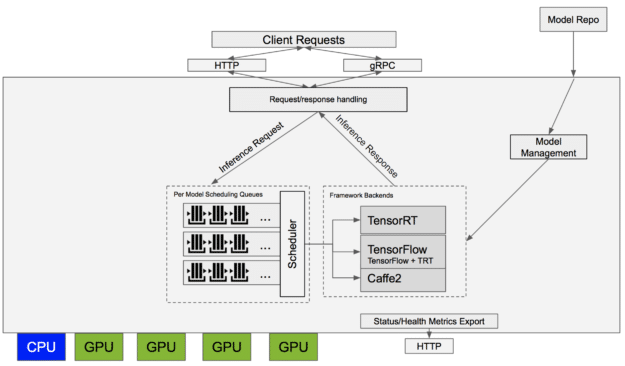

Deploying and Scaling AI Applications with the NVIDIA TensorRT Inference Server on Kubernetes - YouTube

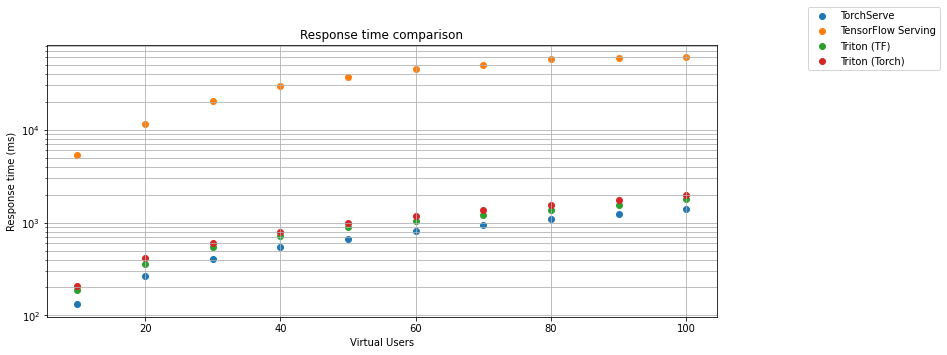

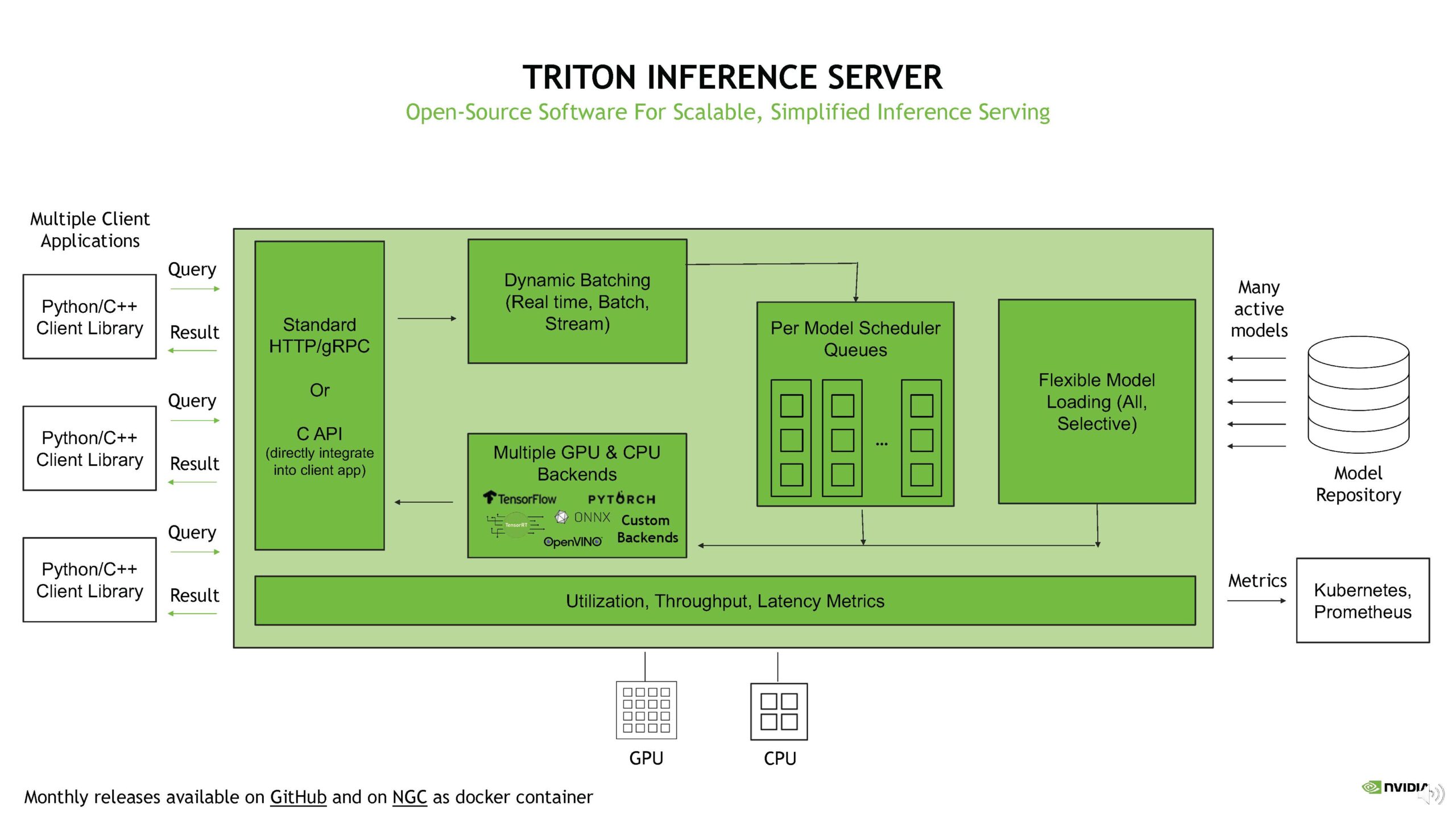

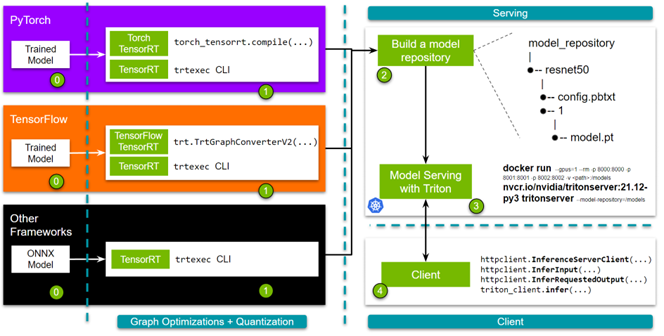

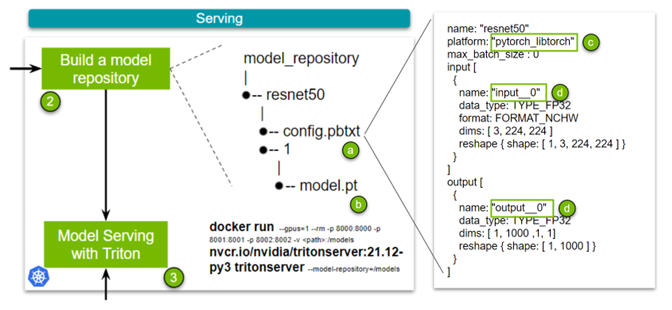

Deploying PyTorch Models with Nvidia Triton Inference Server | by Ram Vegiraju | Towards Data Science

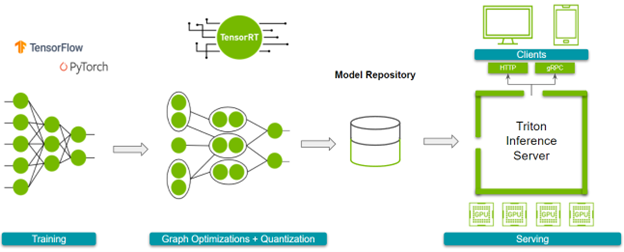

Building a Scaleable Deep Learning Serving Environment for Keras Models Using NVIDIA TensorRT Server and Google Cloud

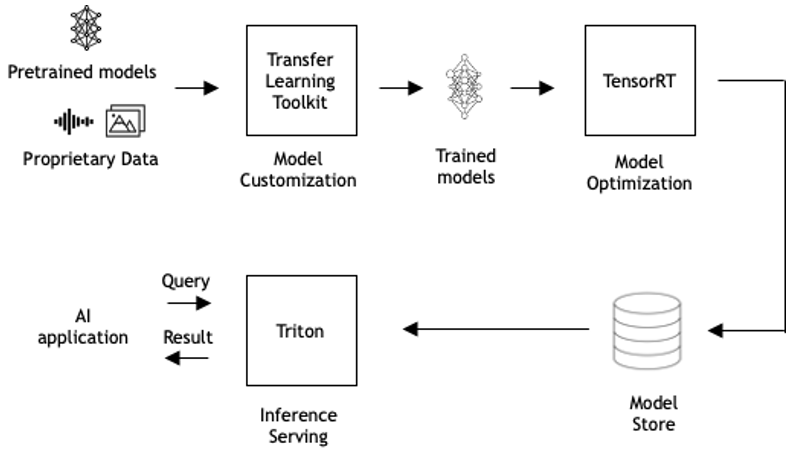

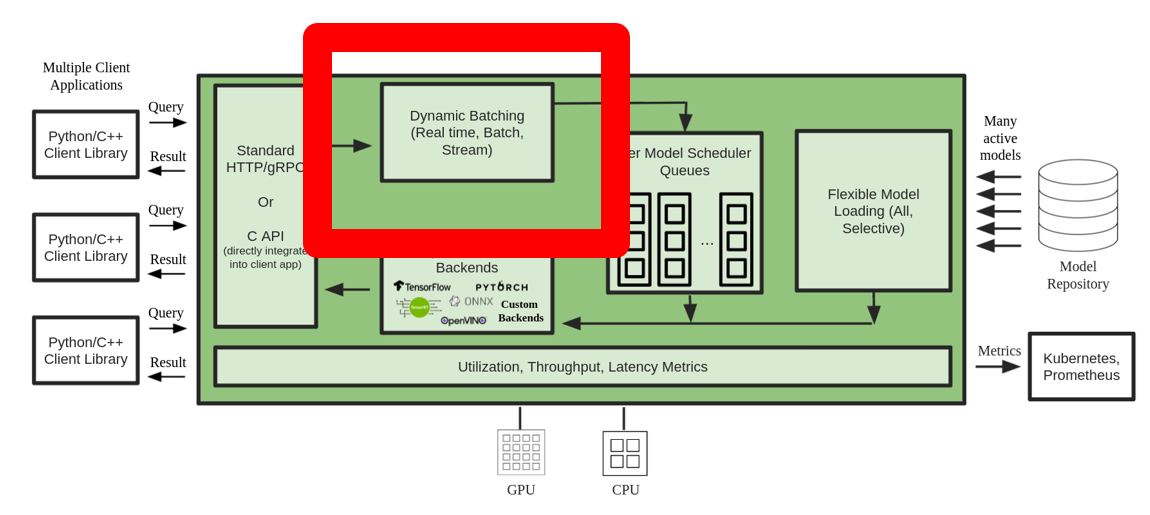

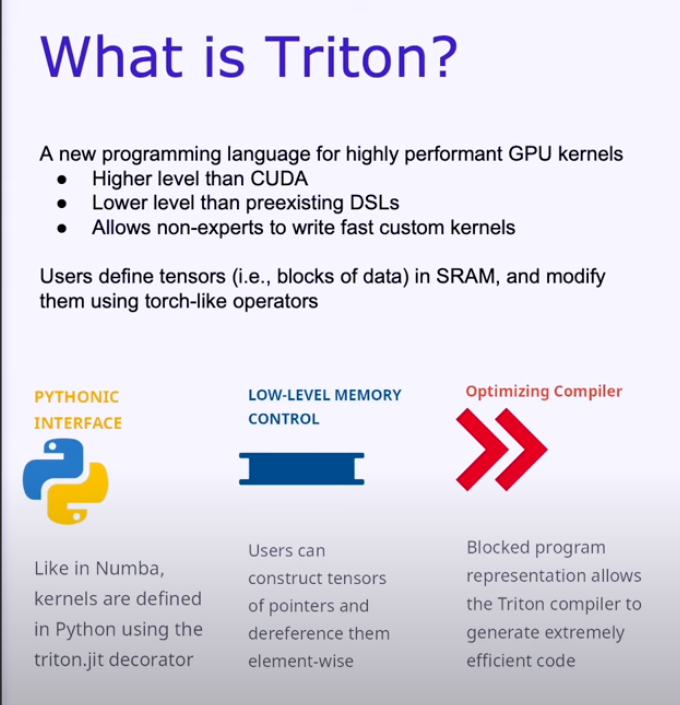

From Research to Production I: Efficient Model Deployment with Triton Inference Server | by Kerem Yildirir | Oct, 2023 | Make It New

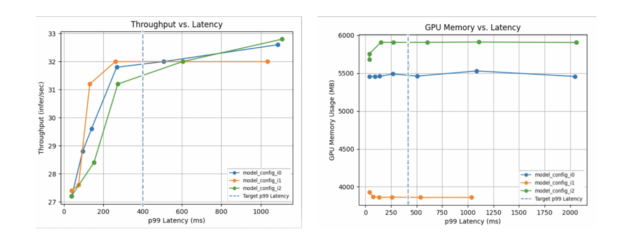

Achieve hyperscale performance for model serving using NVIDIA Triton Inference Server on Amazon SageMaker | AWS Machine Learning Blog

TensorRT and PyTorch benchmark (batch size 32)